JOURNAL OF SOUTHWEST JIAOTONG UNIVERSITY

Vol. 55 No. 1

Feb. 2020

ISSN: 0258-2724 DOI:10.35741/issn.0258-2724.55.1.30

Research Article

Computer and Information Science

A RECOGNITION SYSTEM FOR SUBJECTS' SIGNATURE USING THE SPATIAL DISTRIBUTION OF SIGNATURE BODY

Zainab J. Ahmed a,*, Loay E. George b

a Department of Biology Science, College of Science, University of Baghdad, Baghdad, Iraq, zainabjahmed83@gmail.com

b University of Information Technology and Communication, Baghdad, Iraq, loayedwar57@scbaghdad.edu.iq

Abstract

This investigation proposes an offline signature identification system that uses rotation compensation and matches against features stored in a database. The proposed system consists of five principal stages: (1) data acquisition, (2) signature data file loading, (3) signature preprocessing, (4) feature extraction, and (5) feature matching. Feature extraction comprises determining the center point coordinates and the rotation compensation angle (θ), applying the rotation compensation, computing the discriminating features, and applying a statistical condition. Seven feature sets are used to capture the signature characteristics: (i) density (D), (ii) average (A), (iii) standard deviation (S), and their combinations (iv) density and average (DA), (v) density and standard deviation (DS), (vi) average and standard deviation (AS), and finally (vii) density, average, and standard deviation (DAS). The computed feature values are assembled into a feature vector used to distinguish signatures belonging to different persons. Two distance measures are used in the matching stage: (i) the normalized mean absolute distance (nMAD) and (ii) the normalized mean squared distance (nMSD). The proposed system is tested on a dataset of 612 handwritten signature images. The best recognition rate (98.9%) is achieved using a 21×21 grid of blocks with the density feature set. With the same number of blocks (21×21), the maximum verification accuracy obtained is 100%.

Keywords: Fingerprint, Biometric, Identification, Verification, Energy, Haar Wavelet


I. INTRODUCTION

There are many ways to establish the authenticity of a person's identity, ranging from signatures to fingerprints. A signature is a handwritten mark of a person's name, written by that person as a personal marker. Signatures are routinely used for identification and verification in corporations, government offices, schools, hospitals, banks, and many other institutions [1]. Signature recognition follows two main approaches: the first models the signer and the signing process, so it requires information about how the signature is produced before it can recognize the signature; the second treats the signature as a static two-dimensional image that contains no timing information [2]. Accordingly, handwritten signature recognition is divided into (i) on-line (dynamic) recognition and (ii) off-line (static) recognition. In an on-line system, the signer writes with a special pen called a stylus, which records (i) pen positions, (ii) pressures, and (iii) speeds [3]. An off-line system uses features extracted from a scanned signature image. Such features are easy to obtain because only the pixel image needs to be analyzed. However, off-line systems are harder to design, because many desirable features, such as stroke order, writing speed, and other dynamic information, are not available in the off-line case [4].

Many techniques have been developed for the offline signature recognition and verification problem. The signature recognition process proposed in [5] relies on wavelet transform average framing entropy (AFE) and a probabilistic neural network (PNN). The system was tested using (i) discrete wavelet transform (DWT) entropy, giving the DWT entropy neural network system (DWENN), and (ii) wavelet packet (WP) entropy, giving the WP entropy neural network system (WPENN). The algorithm was tested on two databases, and WPENN achieved the best recognition rate, 92%, with threshold entropy.

An efficient fuzzy Kohonen clustering network (EFKCN) algorithm was used in [6] to build an offline signature recognition and verification technique. The system has five stages: (i) data acquisition, (ii) image processing, (iii) data normalization, (iv) clustering, and (v) evaluation. Offline signature recognition using clustering with the EFKCN algorithm improved the results, reaching 70% accuracy. The authors note that good signature recognition supports both the signature verification system and the personal data verification system.

A new technique was proposed in [7] to recognize handwritten signatures offline. It computes the centroids of two local binary vectors (i.e., the horizontal and the vertical vector). Three tests were performed: (i) a soft evaluation test, (ii) a hard evaluation test, and (iii) a comparison test. All three tests gave good outcomes, and the combined test reached a success rate of 94.8275% on 928 digital images of handwritten signatures.

A set of simple shape features based on geometry was used in [8] for offline signature verification. The features are orientation, area, eccentricity, standard deviation, centroid, kurtosis, skewness, and Euler number. The scanned image is preprocessed before feature extraction to isolate the signature region and remove any spurious noise. The system is first trained on a database of signatures acquired from the persons whose signatures are to be authenticated. An artificial neural network (ANN) then recognizes and verifies signatures as genuine or forged. The results show that the method is robust and clearly distinguishes between genuine and forged signatures, with an effectiveness of 86.67% at a threshold of 80%.


The aim of this research is to recognize and verify offline handwritten signatures using a set of local features computed on the rotation-compensated signature image, in order to achieve high recognition and verification rates. The remainder of this paper is organized as follows. Section II describes the proposed system. Section III presents the experimental setup and the results obtained. Section IV compares the results with previous studies, and Section V gives the concluding remarks.

II. P

ROPOSEDM

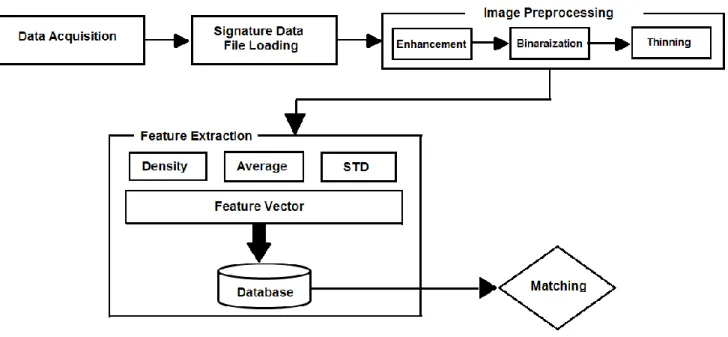

ETHODOLOGYThe framework of the signature recognition and verification system is shown in Figure 1; in general, it is divided into three subparts: (A) data acquisition, (B) signature data file loading, (C) signature preprocessing, (D) feature extraction, and (E) feature matching.

A. Data Acquisition

The signature images come from two sources. The first is the CEDAR dataset of handwritten signature images, from which 55 different signers were used, with only 6 signatures taken for each person. The remaining 47 signers were recruited from the Computer Science Department at the University of Baghdad; each person was asked to sign 6 times, and these signatures were used as the class samples for that person. In total, 612 signatures were acquired from 102 persons.

B. Signature Data File Loading

The input BMP image is loaded, its color bands are read, and it is converted into a gray-scale intensity signature image.
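As a minimal sketch of the gray-scale conversion, assuming the BMP file has already been decoded by an image library into rows of (R, G, B) tuples, the three bands can be combined into a single intensity band. The paper does not give its conversion formula, so the ITU-R BT.601 luma weights (0.299, 0.587, 0.114) and the helper name `to_gray` are assumptions.

```python
def to_gray(rgb_rows):
    """Combine the R, G, B bands (0-255) of each pixel into one gray level (0-255).

    Assumption: ITU-R BT.601 luma weights; the paper does not state its formula.
    """
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
            for row in rgb_rows]


# Usage with a tiny 2x2 "image": white, black, pure red, pure blue.
pixels = [[(255, 255, 255), (0, 0, 0)],
          [(255, 0, 0), (0, 0, 255)]]
gray = to_gray(pixels)
print(gray)
```

Any other weighting (for example, a plain average of the three bands) would slot into the same function.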

C. Signature Preprocessing

Various image processing algorithms are used to process camera-captured or scanned real-world images, including images of human signatures. Preprocessing is a data conditioning step: an image passes through several processing stages before it is ready to supply data to the feature extraction process. Preprocessing is the first stage of the signature recognition and verification system. Every image processing application suffers from noise such as isolated pixels, smeared images, and touching line segments. This noise causes serious distortions in the digital image, which lead to ambiguous features and correspondingly poor recognition and verification rates. The preprocessing step is applied in both the training and testing stages. Its sub-steps are signature enhancement, binarization, and thinning, and it is identical to the preprocessing described in [9-10]. These processes are explained below:

1) Enhancement

The image enhancement step makes image features clearer, emphasizes important features for further processing, and reduces undesired distortions in the image data. It consists of (a) color inversion, (b) brightness stretching, (c) segmentation, (d) de-noising using a median filter, and (e) smoothing using a mean filter.
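The enhancement sub-steps can be sketched in plain Python as below. This is an illustrative version, not the authors' code: the helper names, the 3×3 window size, the min-max form of brightness stretching, and the clipping of windows at the image border are all assumptions, and segmentation step (c) is omitted because the paper does not describe it.

```python
def invert(img):
    """(a) Invert gray levels so dark ink becomes bright foreground."""
    return [[255 - v for v in row] for row in img]


def stretch(img):
    """(b) Min-max brightness stretching of the occupied range onto 0..255."""
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    if hi == lo:  # flat image: nothing to stretch
        return [row[:] for row in img]
    return [[round((v - lo) * 255 / (hi - lo)) for v in row] for row in img]


def _window(img, r, c):
    """Values in the 3x3 neighbourhood of (r, c), clipped at the image border."""
    h, w = len(img), len(img[0])
    return [img[y][x]
            for y in range(max(0, r - 1), min(h, r + 2))
            for x in range(max(0, c - 1), min(w, c + 2))]


def _apply(img, reduce_fn):
    """Replace every pixel by reduce_fn(its 3x3 neighbourhood)."""
    return [[reduce_fn(_window(img, r, c)) for c in range(len(img[0]))]
            for r in range(len(img))]


def median3(img):
    """(d) De-noising with a 3x3 median filter (removes isolated pixels)."""
    return _apply(img, lambda vals: sorted(vals)[len(vals) // 2])


def mean3(img):
    """(e) Smoothing with a 3x3 mean filter."""
    return _apply(img, lambda vals: round(sum(vals) / len(vals)))


# Usage: a thick dark stroke (40) on a light background (200); step (c) is skipped.
gray = [[200] * 5, [40] * 5, [40] * 5, [40] * 5, [200] * 5]
enhanced = mean3(median3(stretch(invert(gray))))
```

Note that a 3×3 median filter also erases strokes only one pixel wide, which is one reason the filter size would need tuning to the scan resolution.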

2) Binarization

Binarization converts the gray-scale signature image into a binary image in which the region of interest (the signature lines) is white and the background is black. Every gray-level pixel with a value greater than 0 is assigned the value 1 (displayed as 255), and all other pixels are assigned 0.

3) Thinning

Thinning makes the thickness of the extracted signature lines uniform while preserving the main features of the line network. It is applied only to a binary image and produces another binary image as output. Thinning is performed with the Zhang-Suen thinning algorithm [11].
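The sketch below shows binarization as described above (every gray level greater than 0 becomes 1) followed by the classic two-sub-iteration Zhang-Suen thinning procedure from [11]. Treating pixels outside the image as background and the helper names `binarize` and `zhang_suen_thin` are assumptions of this sketch, not details given in the paper.

```python
def binarize(gray):
    """Gray level > 0 -> 1 (signature line), otherwise 0 (background)."""
    return [[1 if v > 0 else 0 for v in row] for row in gray]


def zhang_suen_thin(img):
    """Zhang-Suen thinning of a 0/1 image given as a list of rows."""
    img = [row[:] for row in img]  # work on a copy
    h, w = len(img), len(img[0])

    def px(r, c):
        # Pixels outside the image count as background (assumption).
        return img[r][c] if 0 <= r < h and 0 <= c < w else 0

    def neighbours(r, c):
        # P2..P9, clockwise, starting with the pixel directly above P1.
        return [px(r - 1, c), px(r - 1, c + 1), px(r, c + 1), px(r + 1, c + 1),
                px(r + 1, c), px(r + 1, c - 1), px(r, c - 1), px(r - 1, c - 1)]

    changed = True
    while changed:
        changed = False
        for step in (0, 1):
            to_delete = []
            for r in range(h):
                for c in range(w):
                    if img[r][c] != 1:
                        continue
                    n = neighbours(r, c)
                    p2, _, p4, _, p6, _, p8, _ = n
                    b = sum(n)  # B(P1): number of foreground neighbours
                    # A(P1): number of 0 -> 1 transitions in P2, P3, ..., P9, P2
                    a = sum(1 for i in range(8) if n[i] == 0 and n[(i + 1) % 8] == 1)
                    if step == 0:
                        cond = p2 * p4 * p6 == 0 and p4 * p6 * p8 == 0
                    else:
                        cond = p2 * p4 * p8 == 0 and p2 * p6 * p8 == 0
                    if 2 <= b <= 6 and a == 1 and cond:
                        to_delete.append((r, c))
            for r, c in to_delete:  # delete simultaneously after the scan
                img[r][c] = 0
            changed = changed or bool(to_delete)
    return img


# Usage: a filled 3x3 square thins down to its centre pixel.
square = binarize([[90 if 1 <= r <= 3 and 1 <= c <= 3 else 0 for c in range(5)] for r in range(5)])
skeleton = zhang_suen_thin(square)
```

Deletions in each sub-iteration are collected first and applied together, which is what makes the algorithm parallel; deleting pixels one at a time would make the result depend on scan order.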

D. Feature Extraction

Feature extraction is the key to improving an offline signature recognition system. Only the key information of the signature is extracted from the image, namely the most distinctive features that represent the pattern. To distinguish each person from the others, the extracted features are combined into a feature vector. Three different sets of discriminating features are used in the proposed system: (i) the density of the signature lines; (ii) the average of the x- and y-projections; and (iii) the standard deviation of the x- and y-projections, together with their combinations (i.e., DA, DS, AS, DAS). The extracted feature vector is then saved in the database for use in the matching (recognition) stage. To extract these features from the signature image, the following steps are performed:

Figure 1. The overall framework of the proposed system

1) Locate the center point coordinates

Two different methods are used to locate the center coordinates:

a) Locate the center coordinates of the binary signature-line image (i.e., the average coordinates of the signature line points) using the following equations:

$$x_c = \frac{1}{WH}\sum_{y=0}^{H}\sum_{x=0}^{W} x\, I[x,y] \tag{1a}$$

$$y_c = \frac{1}{WH}\sum_{y=0}^{H}\sum_{x=0}^{W} y\, I[x,y] \tag{1b}$$

where W and H are the image width and height, and I[x, y] is the signature image after the preprocessing stage.

b) Boundary points-based method: locate the center as the midpoint between the left and right edges of the signature region for the x-coordinate, and between the top and bottom edges of the signature region for the y-coordinate:

$$x_c = \frac{1}{2}\left(x_{\text{left\_edge}} + x_{\text{right\_edge}}\right) \tag{2a}$$

$$y_c = \frac{1}{2}\left(y_{\text{top\_edge}} + y_{\text{bottom\_edge}}\right) \tag{2b}$$

The edges x_left_edge and x_right_edge are the first and last columns of the signature image that contain line points, and y_top_edge and y_bottom_edge are the first and last rows of the image that contain line points.
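Both center-location methods can be sketched as follows, assuming the preprocessed image is a list of rows with img[y][x] = 1 on signature lines. Here the sums in method (a) are divided by the number of line pixels, which yields the average coordinates of the line points described in the text; that normalization (rather than dividing by W·H) is an assumption of the sketch, as are the function names.

```python
def centroid_center(img):
    """Method (a): average (x, y) of all line pixels, where img[y][x] == 1."""
    pts = [(x, y) for y, row in enumerate(img) for x, v in enumerate(row) if v]
    if not pts:
        raise ValueError("image contains no line pixels")
    n = len(pts)
    return sum(x for x, _ in pts) / n, sum(y for _, y in pts) / n


def boundary_center(img):
    """Method (b): midpoint of the first/last columns and rows that hold line pixels."""
    cols = [x for row in img for x, v in enumerate(row) if v]
    rows = [y for y, row in enumerate(img) if any(row)]
    if not cols:
        raise ValueError("image contains no line pixels")
    return (min(cols) + max(cols)) / 2, (min(rows) + max(rows)) / 2


# Usage: three line pixels at (x, y) = (0, 0), (2, 0) and (2, 2).
sample = [[1, 0, 1],
          [0, 0, 0],
          [0, 0, 1]]
print(centroid_center(sample), boundary_center(sample))
```

The two methods differ whenever ink is unevenly distributed: the centroid is pulled toward dense strokes, while the boundary midpoint depends only on the extreme line points.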

2) Determine the angle for rotation compensation (θ)

Each image must be aligned to a common reference direction so that the features extracted from different samples of a signature can be matched reliably. A compensation angle is therefore computed to ensure correct alignment of the tested signature image. The angle is determined with a model based on principal component analysis (PCA) [12], applied to the binary two-dimensional matrix of the signature lines:

$$\theta = \frac{1}{2}\,\mathrm{atan2}(S, C) \tag{3}$$

$$S = 2\sum_{y=0}^{H}\sum_{x=0}^{W}(x - x_c)(y - y_c)\, I[x,y] \tag{4a}$$

$$C = \sum_{y=0}^{H}\sum_{x=0}^{W}\left[(x - x_c)^2 - (y - y_c)^2\right] I[x,y] \tag{4b}$$

where (x_c, y_c) are the center coordinates, θ is the rotation angle, and I[x, y] is the signature image after the preprocessing stage.
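A minimal sketch of the compensation angle, assuming a 0/1 image indexed as img[y][x] and taking C as the difference of second central moments, Σ[(x − xc)² − (y − yc)²]I[x, y], which is the usual PCA orientation form for the principal axis. The function name is hypothetical.

```python
import math


def compensation_angle(img, xc, yc):
    """theta = 0.5 * atan2(S, C) over the line pixels (img[y][x] == 1)."""
    s = 0.0  # S = 2 * sum (x - xc)(y - yc) I[x, y]          (Eq. 4a)
    c = 0.0  # C = sum ((x - xc)^2 - (y - yc)^2) I[x, y]      (assumed form of Eq. 4b)
    for y, row in enumerate(img):
        for x, v in enumerate(row):
            if v:
                dx, dy = x - xc, y - yc
                s += 2 * dx * dy
                c += dx * dx - dy * dy
    return 0.5 * math.atan2(s, c)


# Usage: a diagonal stroke centred at (2, 2) gives theta = pi / 4.
diagonal = [[1 if x == y else 0 for x in range(5)] for y in range(5)]
theta = compensation_angle(diagonal, 2, 2)
```

Using atan2 rather than atan(S / C) keeps the angle well defined when C is zero, as it is for the diagonal example.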

3) Implementation of rotation compensation

The signature line image is rotated by the angle θ about the center (x_c, y_c) to compensate for its orientation. The new image array is computed with the following rotation transform equations:

$$x' = (x - x_c)\cos\theta - (y - y_c)\sin\theta + x_c \tag{5a}$$

$$y' = (x - x_c)\sin\theta + (y - y_c)\cos\theta + y_c \tag{5b}$$


where (x', y') are the new coordinates and θ is the rotation angle.
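Eqs. (5a)-(5b) can be applied to a binary image by forward-mapping each line pixel and rounding to the nearest grid position, as in the sketch below. Whether θ or −θ should be passed to undo the measured orientation depends on the image axis convention, which the paper does not state; the sketch applies the equations exactly as printed. The function name and the out-of-frame handling are assumptions.

```python
import math


def rotate_image(img, theta, xc, yc):
    """Apply Eqs. (5a)-(5b) to every line pixel and round to the nearest grid point."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    for y, row in enumerate(img):
        for x, v in enumerate(row):
            if not v:
                continue
            dx, dy = x - xc, y - yc
            xr = round(dx * cos_t - dy * sin_t + xc)  # Eq. (5a)
            yr = round(dx * sin_t + dy * cos_t + yc)  # Eq. (5b)
            if 0 <= xr < w and 0 <= yr < h:           # drop pixels leaving the frame
                out[yr][xr] = 1
    return out


# Usage: with rows growing downward, theta = pi/2 moves a pixel one step right of
# the centre to one step below it.
dot = [[1 if (x, y) == (3, 2) else 0 for x in range(5)] for y in range(5)]
turned = rotate_image(dot, math.pi / 2, 2, 2)
```

Forward mapping is the simplest reading of Eq. (5) but can leave one-pixel gaps along rotated strokes; inverse mapping (looping over output pixels and rotating each one back) avoids them.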

4) Determination of discriminating features

In this step, the feature values are computed with the equations below. The feature values of all blocks are arranged in a feature vector that represents the considered signature image sample, and the extracted feature vectors are stored in the database for the matching (recognition or verification) process. Three types of features are adopted:

a) The line density (Den) of each block (i, j):

$$Den(x,y) = \frac{\sum_{y=y_s}^{y_e}\sum_{x=x_s}^{x_e} Rimg(x,y)}{(x_e - x_s + 1)(y_e - y_s + 1)} \tag{6}$$

where Den(x, y) is the line point density of the (x, y)-th block, x_s, x_e, y_s, and y_e are the start and end coordinates of the block, and Rimg is the rotation-compensated signature image.

b) Average value of the line projections for each block:

$$Avr(x,y) = \frac{1}{N}\sum_{y=y_s}^{y_e}\sum_{x=x_s}^{x_e} Rimg(x,y) \tag{7}$$

c) Standard deviation of the line projections for each block:

$$\sigma(x,y) = \sqrt{\frac{1}{N}\sum_{y=y_s}^{y_e}\sum_{x=x_s}^{x_e}\left(Rimg(x,y) - Avr(x,y)\right)^2} \tag{8}$$

where N is the total number of pixels in the block and Rimg is the rotation-compensated signature image.
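The sketch below divides the rotation-compensated 0/1 image into an n × n grid of blocks (n = 21 in the best-performing setting) and computes the block density of Eq. (6) and the block standard deviation of Eq. (8). How the authors place block boundaries when W or H is not a multiple of n is not stated; the integer-division split, the row-major block ordering, and the function names are assumptions.

```python
import math


def block_bounds(size, n):
    """Split indices 0..size-1 into n inclusive (start, end) ranges of near-equal length."""
    return [(i * size // n, (i + 1) * size // n - 1) for i in range(n)]


def block_features(rimg, n):
    """Per-block density (Eq. 6) and standard deviation (Eq. 8) on an n x n grid.

    Blocks are visited row by row; each returned list has n * n entries.
    """
    h, w = len(rimg), len(rimg[0])
    dens, stds = [], []
    for ys, ye in block_bounds(h, n):
        for xs, xe in block_bounds(w, n):
            vals = [rimg[y][x] for y in range(ys, ye + 1) for x in range(xs, xe + 1)]
            count = len(vals)  # (xe - xs + 1)(ye - ys + 1), i.e. N
            if count == 0:     # happens only when the image is smaller than the grid
                dens.append(0.0)
                stds.append(0.0)
                continue
            mean = sum(vals) / count
            dens.append(mean)  # line pixels / block area
            stds.append(math.sqrt(sum((v - mean) ** 2 for v in vals) / count))
    return dens, stds


# Usage: 4x4 image, 2x2 grid, line pixels only in the top-left block.
quad = [[1, 1, 0, 0], [1, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
density, spread = block_features(quad, 2)
```

Concatenating the per-block lists gives a feature vector of length n², so a 21 × 21 grid yields 441 values per feature type.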

5) Determination of a statistical condition

This step removes abnormal cases in the features and improves the results. The conditions are:

a) If σ(p, f) ≤ 0.1 × F̄(p, f), then

$$\sigma(p,f) = 0.1 \times \bar{F}(p,f) \tag{9}$$

b) If σ(p, f) ≤ (1/5) × σ_A(p), then

$$\sigma(p,f) = \frac{1}{5} \times \sigma_A(p) \tag{10}$$

where p and f are the person index and the feature index, F̄ is the mean feature vector of each person, σ is the corresponding standard deviation, and σ_A is the average standard deviation of each person.
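The per-person template and the statistical condition can be sketched as follows, assuming each person's training samples are equal-length feature vectors. The use of the population standard deviation, computing σ_A(p) from the unadjusted standard deviations, and applying condition (9) before condition (10) are assumptions, since the text does not fix them; the function name is hypothetical.

```python
import math


def build_template(samples):
    """Return (mean, adjusted_std) for one person's training feature vectors.

    samples: list of equal-length feature vectors F(p, i, f), one per sample i.
    """
    n, nf = len(samples), len(samples[0])
    mean = [sum(s[f] for s in samples) / n for f in range(nf)]
    std = [math.sqrt(sum((s[f] - mean[f]) ** 2 for s in samples) / n)
           for f in range(nf)]
    sigma_a = sum(std) / nf  # average std of this person (from unadjusted values)
    adjusted = []
    for f in range(nf):
        sd = std[f]
        if sd <= 0.1 * mean[f]:  # condition (9)
            sd = 0.1 * mean[f]
        if sd <= sigma_a / 5:    # condition (10)
            sd = sigma_a / 5
        adjusted.append(sd)
    return mean, adjusted


# Usage: feature 0 never varies, so both conditions raise its std.
template_mean, template_std = build_template([[1.0, 10.0], [1.0, 14.0]])
```

One effect of these floors is that the normalized distances in the matching stage cannot blow up when a feature hardly varies across a person's samples.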

E. Matching

This step is the most important part of every biometric system: it determines the degree of similarity between two signatures. The normalized mean absolute distance (nMAD) and normalized mean square distance (nMSD) metrics are used to measure this similarity. They are defined as follows [14]:

1) Normalized Mean Absolute Differences

$$nMAD(p,f) = \sum \left| \frac{F(p,i,f) - \bar{F}(p,f)}{\sigma(p,f)} \right| \tag{11}$$

2) Normalized Mean Square Differences

$$nMSD(p,f) = \sum \left( \frac{F(p,i,f) - \bar{F}(p,f)}{\sigma(p,f)} \right)^2 \tag{12}$$

where F is the feature vector of a tested pattern sample, F̄ is the feature vector of a class template, and σ is the standard deviation vector.
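A sketch of the two matching metrics and nearest-template recognition follows. The source does not show the summation limits, so this version sums over the features f of a single test vector and divides by the number of features (the "mean" in the metric names); that reading, the template dictionary layout, and the function names are assumptions.

```python
def n_mad(test, mean, std):
    """Normalized mean absolute distance between a test vector and one template."""
    return sum(abs((t - m) / s) for t, m, s in zip(test, mean, std)) / len(test)


def n_msd(test, mean, std):
    """Normalized mean squared distance between a test vector and one template."""
    return sum(((t - m) / s) ** 2 for t, m, s in zip(test, mean, std)) / len(test)


def recognize(test, templates, metric=n_msd):
    """Return the person id whose (mean, std) template is closest under `metric`."""
    return min(templates, key=lambda pid: metric(test, *templates[pid]))


# Usage with two hypothetical enrolled persons.
templates = {"A": ([0.0, 0.0], [1.0, 1.0]),
             "B": ([1.0, 1.0], [1.0, 1.0])}
best = recognize([0.9, 0.8], templates)
```

Squaring in nMSD penalizes a few large per-feature deviations more heavily than nMAD does, which is one plausible reason the two metrics rank feature sets differently in Table 1.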

III. TEST RESULTS

The performance evaluation was divided into two parts: (i) testing the recognition efficiency and (ii) testing the verification performance.

A. Recognition Results

Recognition performance was measured by the correct recognition rate (CRR), computed with the following equation:

$$CRR = \frac{N_{Correct}}{N_{Tried}} \tag{13}$$

where N_Correct is the number of correct recognition decisions and N_Tried is the total number of tests tried.
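Eq. (13) amounts to counting matching labels. The sketch below expresses the result as a percentage, to match the figures reported in Table 1; the function name is hypothetical.

```python
def correct_recognition_rate(predicted, actual):
    """CRR = N_Correct / N_Tried, expressed as a percentage."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty label lists of equal length")
    n_correct = sum(p == a for p, a in zip(predicted, actual))
    return 100.0 * n_correct / len(actual)


# Usage: three of four test signatures are assigned to the right person.
rate = correct_recognition_rate(["A", "B", "B", "C"], ["A", "B", "C", "C"])
```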

Two main parameters may affect the recognition results: (i) the number of blocks into which the extracted signature image is divided during processing, and (ii) the method used to determine the center reference point of the signature. Table 1 reports the results for the two proposed centroid methods, for the seven feature vector combinations (i.e., density 'D'; average 'A'; standard deviation 'S'; density + average 'DA'; density + standard deviation 'DS'; average + standard deviation 'AS'; and density + average + standard deviation 'DAS'), and for the two matching metrics (normalized mean absolute distance 'nMAD' and normalized mean square distance 'nMSD').

Table 1 shows that the highest recognition rate (98.9%) is obtained with 21×21 blocks and the density feature set, using the first centroid method and nMSD matching.

Table 1.

The recognition rate (%)

a. First center reference method

Matching: nMAD

| Number of blocks | D | A | S | DA | DS | AS | DAS |
|---|---|---|---|---|---|---|---|
| 5×5 | 38.7 | 32.4 | 78.1 | 41.0 | 84.8 | 51.6 | 58.7 |
| 7×7 | 47.4 | 43.0 | 88.7 | 57.4 | 91.2 | 68.5 | 75.3 |
| 9×9 | 69.0 | 66.7 | 93.6 | 75.8 | 93.6 | 83.8 | 86.8 |
| 11×11 | 78.8 | 80.1 | 94.3 | 87.3 | 94.1 | 91.7 | 93.0 |
| 13×13 | 88.6 | 88.2 | 95.8 | 92.3 | 95.8 | 94.3 | 94.4 |
| 15×15 | 92.6 | 93.1 | 95.4 | 94.3 | 95.8 | 95.3 | 95.8 |
| 17×17 | 95.4 | 94.9 | 95.9 | 95.4 | 95.9 | 95.9 | 95.9 |
| 19×19 | 96.7 | 95.6 | 95.9 | 95.8 | 96.1 | 96.1 | 96.1 |
| 21×21 | 97.9 | 94.1 | 94.1 | 94.1 | 94.1 | 94.1 | 94.1 |

Matching: nMSD

| Number of blocks | D | A | S | DA | DS | AS | DAS |
|---|---|---|---|---|---|---|---|
| 5×5 | 36.4 | 28.3 | 83.0 | 34.8 | 89.1 | 40.0 | 44.8 |
| 7×7 | 50.5 | 27.8 | 90.5 | 35.1 | 93.6 | 38.4 | 47.9 |
| 9×9 | 71.7 | 44.1 | 94.0 | 50.7 | 95.8 | 59.2 | 63.7 |
| 11×11 | 84.5 | 55.7 | 95.1 | 61.9 | 95.3 | 69.9 | 74.0 |
| 13×13 | 94.6 | 67.2 | 95.6 | 75.2 | 95.9 | 80.4 | 83.3 |
| 15×15 | 96.9 | 81.7 | 95.9 | 85.1 | 95.9 | 87.3 | 89.5 |
| 17×17 | 96.9 | 89.2 | 96.1 | 91.3 | 96.1 | 92.8 | 94.0 |
| 19×19 | 97.9 | 93.0 | 96.1 | 94.1 | 96.1 | 94.9 | 95.3 |
| 21×21 | 98.9 | 92.3 | 94.1 | 93.3 | 94.1 | 93.8 | 93.8 |

b. Second center reference method

Matching: nMAD

| Number of blocks | D | A | S | DA | DS | AS | DAS |
|---|---|---|---|---|---|---|---|
| 5×5 | 30.9 | 34.5 | 73.7 | 42.3 | 80.1 | 46.2 | 52.8 |
| 7×7 | 50.5 | 37.6 | 87.6 | 49.2 | 88.4 | 59.6 | 67.5 |
| 9×9 | 66.0 | 64.2 | 92.5 | 74.0 | 93.5 | 80.6 | 84.0 |
| 11×11 | 78.6 | 79.4 | 94.8 | 85.1 | 94.9 | 89.5 | 91.0 |
| 13×13 | 83.8 | 85.5 | 95.4 | 89.4 | 95.6 | 93.0 | 94.0 |
| 15×15 | 89.4 | 91.8 | 96.4 | 94.0 | 96.2 | 95.3 | 95.3 |
| 17×17 | 92.2 | 93.1 | 95.3 | 94.3 | 94.9 | 95.1 | 94.9 |
| 19×19 | 94.8 | 94.4 | 94.8 | 94.4 | 94.6 | 94.4 | 94.6 |
| 21×21 | 95.8 | 94.9 | 94.9 | 94.9 | 94.9 | 94.9 | 94.9 |

Matching: nMSD

| Number of blocks | D | A | S | DA | DS | AS | DAS |
|---|---|---|---|---|---|---|---|
| 5×5 | 30.2 | 27.0 | 79.2 | 32.2 | 85.9 | 33.0 | 38.7 |
| 7×7 | 50.7 | 23.4 | 88.9 | 30.1 | 92.5 | 32.4 | 38.7 |
| 9×9 | 71.1 | 44.9 | 94.3 | 53.9 | 95.9 | 54.1 | 59.8 |
| 11×11 | 86.1 | 61.3 | 96.4 | 66.5 | 96.7 | 70.9 | 74.2 |
| 13×13 | 90.7 | 67.8 | 97.4 | 73.4 | 97.5 | 77.5 | 81.4 |
| 15×15 | 94.9 | 79.7 | 96.9 | 83.7 | 97.1 | 85.1 | 88.4 |
| 17×17 | 97.5 | 85.0 | 96.1 | 88.4 | 96.1 | 90.0 | 91.7 |
| 19×19 | 98.5 | 89.1 | 95.1 | 91.5 | 95.1 | 92.6 | 93.6 |
| 21×21 | 98.7 | 92.6 | 95.1 | 94.0 | 95.1 | 94.4 | 94.6 |

B. Verification (Authentication) Results

Two criteria are used to measure signature verification performance: the false acceptance rate (FAR) and the false rejection rate (FRR). Accepting a forged signature as genuine is a false acceptance, and rejecting a genuine signature as forged is a false rejection. The accuracy of the system is the mean of the percentage of genuine signatures verified as genuine and the percentage of forged signatures verified as forged [15,16].

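$$FAR = \left(\frac{\text{Number of accepted impostors}}{\text{Total number of impostor accesses}}\right) \times 100\% \tag{14}$$

$$FRR = \left(\frac{\text{Number of rejected genuine accesses}}{\text{Total number of genuine accesses}}\right) \times 100\% \tag{15}$$

$$Accuracy = \frac{TP + TN}{P + N} \tag{16}$$

where P and N are the numbers of positive (genuine) and negative (forged) samples, and TP and TN are the numbers of true positives and true negatives.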
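The sketch below computes FAR, FRR, and accuracy as defined above for one distance threshold, assuming a claim is accepted when its matching distance is at or below the threshold; accuracy is scaled by 100 to read as a percentage. Sweeping the threshold over a range of values produces curves like those in Figure 2. The function name and the input layout (separate lists of genuine and impostor distances) are assumptions.

```python
def verification_rates(genuine, impostor, threshold):
    """FAR, FRR and accuracy (all in %) for one distance threshold.

    genuine / impostor: matching distances of genuine and forged attempts.
    A claim is accepted when its distance is <= threshold.
    """
    false_accepts = sum(d <= threshold for d in impostor)  # impostors accepted
    false_rejects = sum(d > threshold for d in genuine)    # genuine rejected
    tp = len(genuine) - false_rejects                      # genuine accepted
    tn = len(impostor) - false_accepts                     # impostors rejected
    far = 100.0 * false_accepts / len(impostor)
    frr = 100.0 * false_rejects / len(genuine)
    accuracy = 100.0 * (tp + tn) / (len(genuine) + len(impostor))
    return far, frr, accuracy


# Usage: sweep a few thresholds over toy distances.
genuine = [1, 2, 3, 9]
impostor = [5, 8, 10, 12]
for t in (4, 6, 11):
    print(t, verification_rates(genuine, impostor, t))
```

Raising the threshold trades a lower FRR for a higher FAR, which is the trade-off that the ROC curve in Figure 2b plots.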

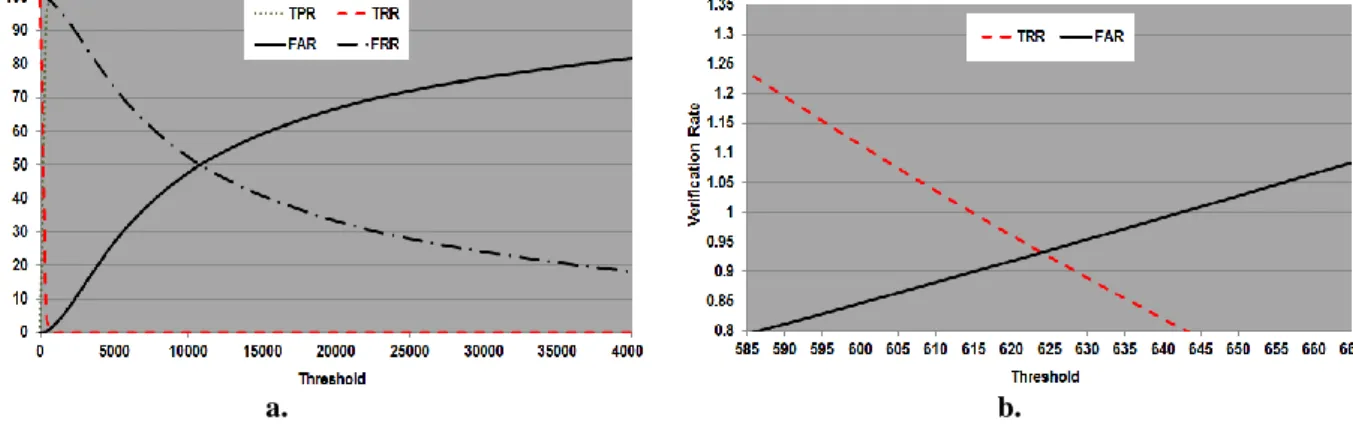

The parameters that gave the best recognition rate (nMSD matching, the density feature set D, the first centroid method, and 21×21 blocks) are used to test the verification performance. Figure 2a shows how the verification rates depend on the similarity distance threshold, and Figure 2b shows the ROC curve of FAR against FRR for various threshold values. Table 2 lists FAR, FRR, and accuracy for different threshold values; the best accuracy obtained is 100%.

Figure 2. (a) The verification rates against the distance threshold values; (b) the ROC curve

Table 2.

The FAR, FRR and accuracy with various threshold values

| Threshold | FPR (%) | FRR (%) | Accuracy (%) |
|---|---|---|---|
| 1574924.69 | 99.9336698 | 0.06633016 | 99.93 |
| 1599234.01 | 99.9417589 | 0.05824112 | 99.94 |
| 1625535.89 | 99.9611726 | 0.03882741 | 99.96 |
| 1673756 | 99.9838219 | 0.01617809 | 99.98 |
| 1927608.99 | 99.9935288 | 0.00647124 | 99.99 |
| 1961482.63 | 99.9951466 | 0.00485343 | 100.00 |

IV. COMPARATIVE STUDIES

The proposed signature system is evaluated by comparing it with previous studies; the comparison shows that the proposed system achieves better results.

A. Recognition stage: Table 3 compares the recognition rate obtained by the proposed system with those reported in previous studies.

B. Verification stage: Table 4 compares the verification results with those of other studies.

Table 3.

Comparing the recognition rate

| Reference | Recognition rate |
|---|---|
| [6] | 92.06% |
| [7] | 94.8275% |
| Our method | 98.9% |

Table 4.

Comparing the verification performance

| Reference | Accuracy |
|---|---|
| [8] | 86.67% |
| [13] | 96.8% |
| Our method | 100% |

V. CONCLUSION

An offline signature system with rotation compensation is implemented in this work. The proposed recognition method, based on the density feature set with nMSD matching and the first center reference point method, achieves a recognition rate of 98.9% using 21×21 blocks and a verification accuracy of 100%; these results indicate that the method is effective.

For future work, the proposed system should be applied to a larger dataset to determine whether its performance remains stable as the dataset grows. Signature recognition and verification could also be implemented with the same rotation compensation method but with different signature features, and the results compared with those of the current scheme.

REFERENCES

[1] SURYANI, D., IRWANSYAH, E. and CHINDRA, R. (2017) Offline Signature Recognition and Verification System using Efficient Fuzzy Kohonen Clustering Network (EFKCN) Algorithm. Procedia Computer Science, 116, pp. 621-628.

[2] CHOUDHARY, N.Y., PATIL, R., BHADADE, U. and CHAUDHARI, B.M. (2013) Signature Recognition & Verification System Using Back Propagation Neural Network. International Journal of IT, Engineering and Applied Sciences Research (IJIEASR), 2(1), pp. 1-8.

[3] ISMAIL, I.A., RAMADAN, M.A., EL DANAF, T.S. and SAMAK, A.H. (2010) Signature Recognition using Multi Scale Fourier Descriptor and Wavelet Transform. International Journal of Computer Science and Information Security (IJCSIS), 7(3), pp. 14-19.

[4] JADHAV, V., KADAM, N., KELUSKAR, P. and KHAN, A. (2016) Artificial Neural Network Based Signature Verification. IOSR Journal of Computer Engineering (IOSR-JCE), National Conference on "Changing Technology and Rural Development", pp. 28-35.

[5] DAQROUQ, K., SWEIDAN, H., BALAMESH, A. and AJOUR, M.N. (2017) Off-Line Handwritten Signature Recognition by Wavelet Entropy and Neural Network. Entropy Journal, 19(6), pp. 1-20.

[6] SURYANI, D., IRWANSYAH, E. and CHINDRA, R. (2017) Offline Signature Recognition and Verification System using Efficient Fuzzy Kohonen Clustering Network (EFKCN) Algorithm. Procedia Computer Science, 116, pp. 621-628. 2nd International Conference on Computer Science and Computational Intelligence, Bali, Indonesia.

[7] KAMAL, N.N. and GEORGE, L.E. (2018) Offline Signature Recognition Using Centroids of Local Binary Vectors. In: New Trends in Information and Communications Technology Applications, Third International Conference, NTICT 2018, Baghdad, Iraq, pp. 255-269.

[8] PANCHAL, S.T. and YERIGERI, V.V. (2018) Offline signature verification based on geometric feature extraction using artificial neural network. IOSR Journal of Electronics and Communication Engineering (IOSR-JECE), 13(3), pp. 53-59.

[9] AHMED, Z.J. (2018) Fingerprints Matching Using the Energy and Low Order Moment of Haar Wavelet Subbands. Journal of Theoretical and Applied Information Technology, 96(18), pp. 6191-6202.

[10] AHMED, Z.J. and GEORGE, L.E. (2018) Fingerprints Identification and Verification Based on Local Density Distribution with Rotation Compensation. Journal of Theoretical and Applied Information Technology, 96(24), pp. 8313-8323.

[11] ZHANG, T.Y. and SUEN, C.Y. (1984) A Fast Parallel Algorithm for Thinning Digital Patterns. Communications of the ACM, 27(3), pp. 236-239.

[12] YANG, J., ZHANG, D., FRANGI, A.F. and YANG, J. (2004) Two-dimensional PCA: A New Approach to Appearance-Based Face Representation and Recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 26(1), pp. 131-137.

[13] ABBAS, N.H., YASEN, K.N., FARAJ, K.H.A., RAZAK, L.F.A. and MALALLAH, F.L. (2018) Offline Handwritten Signature Recognition Using Histogram Orientation Gradient and Support Vector Machine. Journal of Theoretical and Applied Information Technology, 96(8), pp. 2075-2084.

[14] PRATT, W.K. (2001) Digital Image Processing. 3rd ed. Los Altos, California: A Wiley-Interscience Publication.
